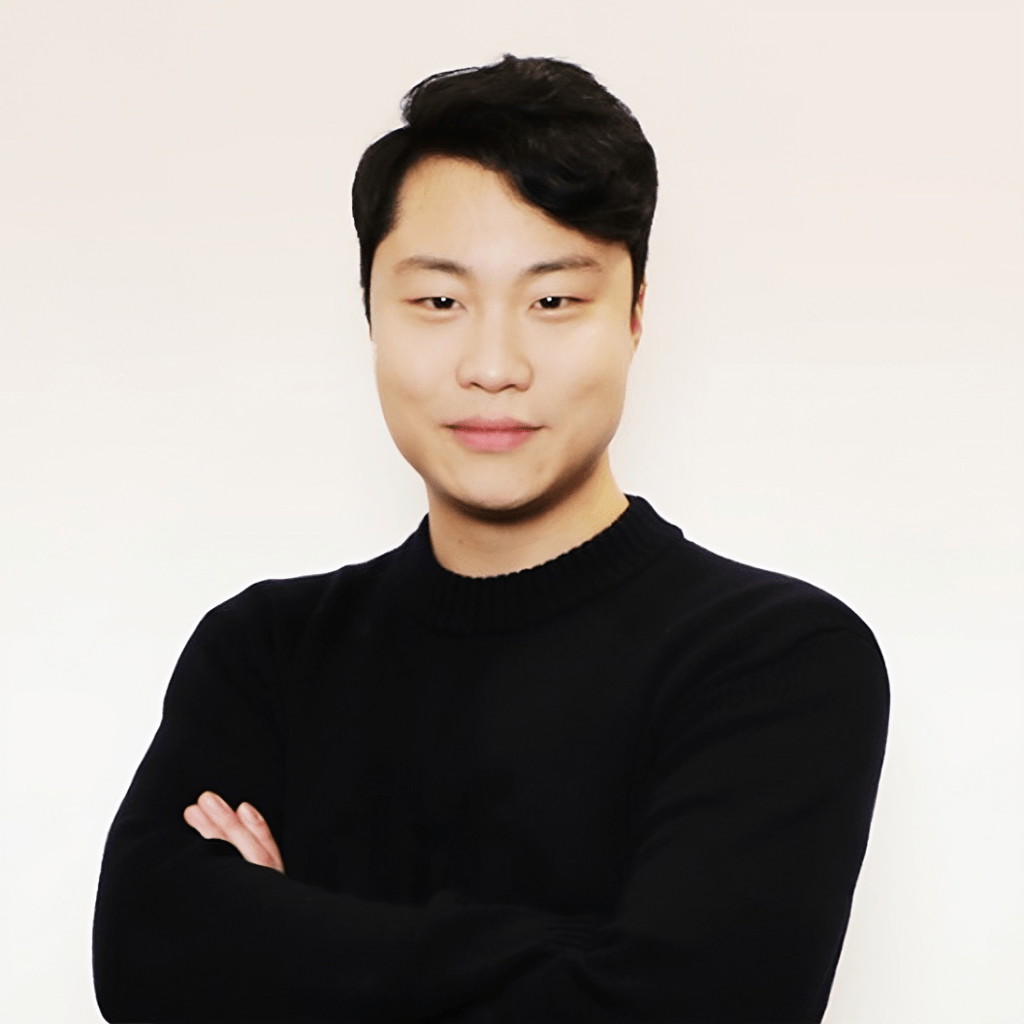

Before he was pitching at AWS re:Invent and speaking at World Economic Forum events, Jae Lee was a data scientist decoding threats for South Korea's Ministry of National Defense. He was good at it - award-winning, in fact. But somewhere between military cyber ops and Silicon Valley, he saw a problem nobody else wanted to touch: 80% of the world's data is video, and AI could barely watch a clip without losing the thread.

He co-founded TwelveLabs in 2021 to fix that. Not by wrapping existing models in a prettier API, but by building proprietary video foundation models from the ground up. It was, by his own admission, a painful journey. While competitors moved fast with shortcuts, TwelveLabs spent two and a half to three years doing the hard thing.

That patience looks like strategy now. TwelveLabs' flagship models - Marengo 2.7 and Pegasus 1.5 - can search, classify, and reason across video with what Lee describes as "human-level understanding." Marengo handles multimodal embeddings across 47 languages at 78.5% composite accuracy. Pegasus reasons continuously over up to two hours of video in a single pass. Together, they process over 10,000 hours of content per day at 60 times real-time speed.

The customers range from sports broadcasters to federal intelligence agencies. In-Q-Tel - the CIA's venture arm - is among TwelveLabs' investors, which tells you something about what serious video intelligence looks like in 2025.